Please note: Robots ended on 15 April 2018. To find out what exhibitions and activities are open today, visit our What’s On section.

Here are eight things I learnt about both the history and the future of human-robot relations, from the extremely clever Professor Danielle George, Dr Adam Rutherford and science broadcaster Dallas Campbell.

Robots have been around for even longer than I realised

Our Robots exhibition charts the 500-year quest to replicate ourselves in robotic form, but Adam Rutherford gave two examples of robots being imagined in Homer’s Iliad, which is nearly 3,000 years old.

In the story, Thetis goes to Hephaestus to ask him to make a replacement set of armour for her son Achilles, and finds him ‘hard at work and sweating as he bustled about at the bellows in his forge.’ Now, putting considerations of heel protection aside, Hephaestus sounds like a pretty decent metalworker.

So much so in fact, that Homer imagines:

‘He was making a set of 20 tripods to stand round the walls of his well-built hall. He had fitted golden wheels to all their feet so that they could run off to a meeting of the gods and return home again, all self-propelled—an amazing sight.’

Not only that, but Homer then goes on to describe what Austin Powers would have called a ‘fembot’, but what we would more politely call a gynoid:

‘Waiting-women hurried along to help their master. They were made of gold but looked like real girls and could not only speak and use their limbs, but were also endowed with intelligence and had learned their skills from the immortal gods. While they scurried round to support their lord, Hephaestus moved unsteadily to where Thetis was seated.’

Robots! In Ancient Greece!

Ok, so it was only the idea of robots, but I was genuinely thrilled to know that such a detailed level of thought around automata was kicking around a good few thousand years ago.

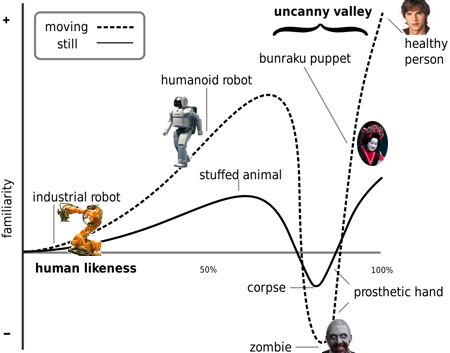

The uncanny valley

Not a twee place-name in middle America, but rather a scientific concept. The uncanny valley describes the dip in emotional response when we encounter something that appears to be human but isn’t quite ‘right’. The more we encounter the ‘human’ in something that isn’t human, the stranger we find it.

Hypothesised in the 1970s by Japanese roboticist Masahiro Mori, the uncanny valley usually relates to robots, but can also apply to life-like dolls, computer animations and some medical conditions.

As Dallas stated, everyone likes the Pepper robots because they’re cute but look distinctly ‘robotic’, whereas Kodomoroid freaks us out a little because she looks a lot like us, but isn’t quite ‘us’.

To illustrate the point further, here’s an article that gives us 10 creepy examples of the uncanny valley.

Artificial intelligence is still algorithmic and embryonic

I wrote this down as soon as Danielle said it, as I thought it was a very intelligent quote (unsurprising as it came from someone who’s both super smart and awesome) and I’ll be throwing this into conversations regularly from now on, whether it’s relevant or not.

Danielle was saying that AI is still very much in its infancy, as we’re not really ‘there’ yet with transferable knowledge. A robot can learn a task and become absolutely world class at performing that task, but it then can’t apply that learning to a new task in the way that a human can.

However, in March 2016 Google DeepMind’s AlphaGo beat Lee Sedol, one of the world’s top human Go players. During the games, AlphaGo played a number of highly inventive and surprising moves that overturned hundreds of years of received wisdom about the game.

The creativity that AlphaGo displayed wasn’t learnt from a human; it came from principles it taught itself over thousands of hours of playing the game. This is data representation from learning, which is a new concept in AI. And if that wasn’t scary enough…

Someone has developed a robot with wheels AND legs

Yes that’s right, wheels AND legs. Because why have one when you can have both? Whilst making her programme called Rise of the Robots, Danielle visited Boston Dynamics and encountered a robot called Handle, designed to pick up heavy loads and move more quickly with them in tight spaces, which is perfect for a warehouse environment.


Whilst at Boston Dynamics, Danielle also met BigDog, an all-terrain robot with four articulated, animal-like legs that can absorb shock and recycle energy from one step to the next. Seeing BigDog in action gave me uncanny valley vibes, as it moves like an animal but doesn’t have a face. Whilst I was slightly jealous of BigDog’s ability to go out in all weathers (my dog stays in if there’s moisture in the air), I was a bit spooked by a robot that runs at 10 km per hour, gets right back up when it’s kicked to the ground and happily trots through snow and water.

The Valkyries ride again

Only this time, they’re heading for Mars. The Valkyrie robot is being developed by NASA to live on Mars and to colonise the planet for us humans.

She can autonomously make decisions, move around and complete tasks. She has a 3D vision system, three fingers and a thumb and was designed to work in environments that are too hazardous for astronauts. She’s a little unsteady on her feet right now, but is being developed to have collision-free movement.

Danielle pointed out that unlike some other service robots, Valkyrie is noticeably female in form because her actuators push her chest out and give her a fairly ample bosom…

Should robots have rights?

An audience member asked at what point we would need to bring in safeguarding measures for AI beings, which sparked a really interesting conversation around ethics and the ‘rights’ of robots.

At the moment, there are guidelines in place to protect humans against the kind of design principles that could, in theory, create a ‘bad’ robot. The British Standards Institution’s BS 8611:2016 document is a guide to the ethical design and application of robots and robotic systems.

Robot deception, addiction and self-learning systems exceeding their remits are all noted as hazards to consider when designing an automaton, alongside adherence to Asimov’s three laws of robotics:

- A robot may not injure a human being, or, through inaction, allow a human being to come to harm.

- A robot must obey orders given it by human beings, except where such orders would conflict with the First Law.

- A robot must protect its own existence, as long as such protection does not conflict with the First or Second Law.

But what about protecting the robot itself? As Danielle pointed out, the robots themselves are neutral; it’s what we as humans do with them that takes things into another dimension.

Adam suggested that if the robot couldn’t feel pain, then it didn’t necessarily need to be granted any rights. Which makes sense, but left me feeling like this was an area that hasn’t yet been fully considered.

We won’t be having cyborg babies any time soon

Mainly because human battery life is far superior to that of a robot. Apologies to the disappointed audience member who eagerly asked this question!

Dallas Campbell is still a man of the people

When the discussion moved to voice-activated devices around the home (Alexa, Siri etc.), Dallas Campbell scoffed at the adverts that show such devices switching on the kettle for you.

As Dallas pointed out, you still have to fill the kettle with water, so is it really that much of an effort to flick the switch?

Despite being off the telly, Dallas Campbell is clearly still ‘one of us’ and doesn’t have a robot making his brews. Yet.
