There are several good reasons why you might want to emulate an older computer system on a newer one. You might want to use old programs, for a start: the 70-year history of the programmable computer has led to an unimaginable volume of software, but most of it is only available on computers that are now sitting in museums, or simply don’t exist.

Of course, any individual program can be converted to work on computers other than the one for which it was originally designed (that’s why you can still play Tetris, even if you don’t have access to a Soviet Elektronika-60). But with an emulator you get the use of all the software that was written for the original machine, rather than just one program; and you also get the ability to open files that you or someone else created using it.

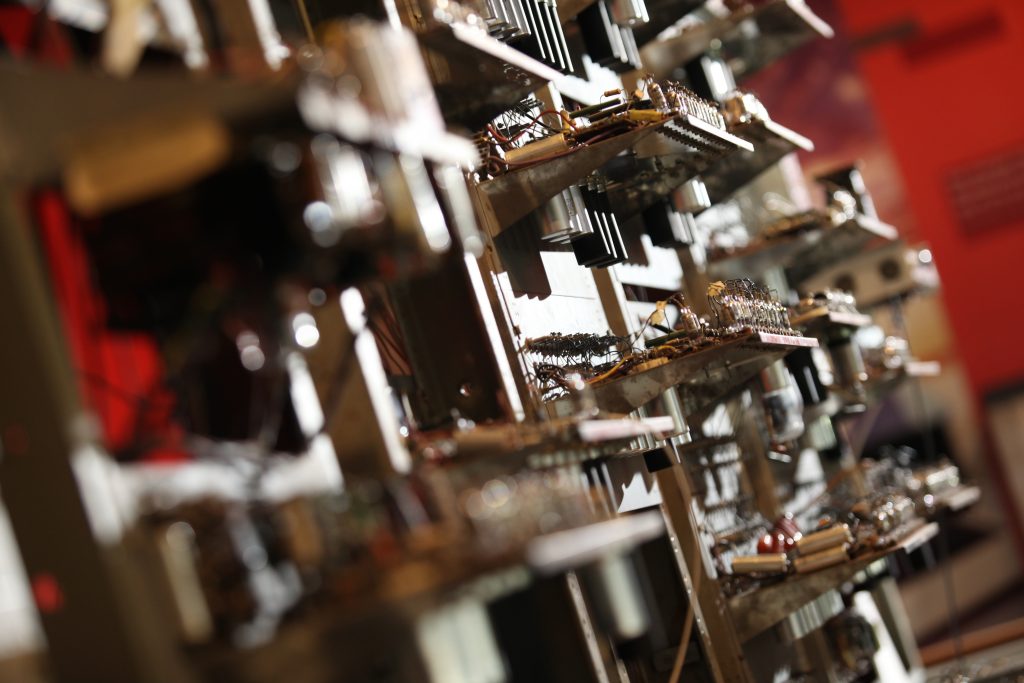

Science Museum Group © The Board of Trustees of the Science Museum

This is oversimplifying slightly: you will probably also need some way of reading your programs and data from punched cards or magnetic tape or whatever other storage media the old computer used (or at least someone will). But, all the same, it is easy to see why emulators can be useful for running old programs that nobody may ever bother to re-implement. And it is no surprise that computer manufacturers down the years have sometimes themselves provided software or hardware emulation of their older machines as a way of helping customers decide to switch to the latest, but incompatible, model.

Another reason to use an emulated version of an old computer might be for educational or self-educational purposes. Modern computers and their operating systems are, by the standards of a few decades ago, ferociously complex, but that complexity is very largely hidden from the user (except when things go badly wrong). It cannot, however, be entirely hidden from people who are interested, say, in learning to program in machine code or assembly language: the low-level instructions that the processor actually performs.

Such people will often find it helpful to start out on an older and less powerful computer, one where the instruction set is smaller and the memory map is simpler and there are fewer levels of abstraction, and only afterwards (if at all) apply what they have learnt to today’s machines.

And a third reason is simple nostalgia. Apart from wanting to use any specific application or play any specific antique video game, and even if we are entirely innocent of the desire to program in machine code, there can be a pleasure just in seeing again the blinking cursor and the cryptic error messages that we used to see when we were all younger. That, too, can be a reason to use an emulator.


But none of these reasons seems to apply very strongly in the case of the first programmable electronic computer of them all, the Manchester Small-Scale Experimental Machine (SSEM) or ‘Baby’. Baby was in use for only a short time; the size of the programs it could run was very limited; and it was the only one. As a result, not many programs for Baby were ever written—and certainly none that could not be replicated effortlessly on a modern (or even a not especially modern) computer. No-one is likely to bother with a Baby emulator on the basis that some program they would like to use is available on Baby and not on other systems.

And the educational motivation is not terribly convincing either. However useful a smaller and simpler machine may sometimes be for instructional purposes, Baby will almost certainly seem too small and too simple. Its store, or memory, only had room for 32 ‘words’, each of which could hold either a number or a single instruction; and its machine language consisted of only seven elementary instructions.

What is worse, the seven seem eccentrically chosen. There is an instruction to subtract, but not to add. The internal register that tells the computer which instruction to perform next is incremented after the current instruction has been completed, not before. This means that if you tell the machine ‘jump to the instruction in storage cell 15 and carry on from there’, it will set its register to 15 and then immediately move on to 16, leaving you one instruction ahead of where you intended.

However, you cannot even tell it directly to jump to 15 (or 16): the instruction needs to take the form ‘jump to the cell whose number is stored in cell 30’, say, and you have to make sure cell 30 is storing a 14 (or a 15). Every conditional operation—every ‘if… then’—has to be expressed using the single Test instruction: ‘skip the next instruction if the number stored in the accumulator is negative’. You would probably expect the first modern computer to have a fairly limited repertoire of operations; but Baby’s order code is not just limited. It is crabbed, improbable, perverse.
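The control-flow rules just described can be modelled in a few lines. The Python sketch below is illustrative only: the function name `next_cell` and the labels `"jump"` and `"test"` are invented for this example and are not taken from any Baby documentation or emulator.

```python
def next_cell(ci, store, op="other", operand=0, acc=0):
    """Return the number of the cell whose instruction runs next.

    ci      -- cell number of the instruction that has just been performed
    store   -- mapping from cell number to the number held there
    op      -- "jump", "test", or anything else for an ordinary instruction
    operand -- for a jump, the cell that holds the target
    acc     -- current accumulator value, used only by "test"
    """
    if op == "jump":
        ci = store[operand]      # indirect: the target is read from the store
    elif op == "test" and acc < 0:
        ci += 1                  # skip the following instruction
    return ci + 1                # the register always moves on by one


store = {30: 14}                                    # cell 30 holds 14
print(next_cell(5, store, op="jump", operand=30))   # 15
print(next_cell(5, store, op="test", acc=-1))       # 7
print(next_cell(5, store, op="test", acc=3))        # 6
```

With cell 30 holding 14, the jump lands on cell 15; had it held 15, execution would have resumed at 16.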

The binary numbers that the machine uses to encode its instructions and data are back-to-front: unlike every other positional system in the world, Baby binary stores the least significant digit on the left and the most significant digit on the right. In normal binary you count 1 (one), 10 (one two and no ones), 11 (one two and one one), 100 (one four, no twos, no ones), and so on—but on Baby you count 1 (one), 01 (no ones and one two), 11 (one one and one two), 001 (no ones, no twos and one four).
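For readers who want to check the counting sequence, here is a small Python sketch that converts between ordinary integers and least-significant-digit-first strings. The helper names `to_baby_bits` and `from_baby_bits` are made up for this illustration, and the sketch only handles non-negative numbers.

```python
def to_baby_bits(n):
    """Write a non-negative integer with the least significant bit first."""
    return bin(n)[2:][::-1]      # ordinary binary digits, reversed


def from_baby_bits(bits):
    """Read a least-significant-bit-first string back into an integer."""
    return int(bits[::-1], 2)


for n in range(1, 5):
    # columns: decimal, ordinary binary, Baby-style binary
    print(n, bin(n)[2:], to_baby_bits(n))
# 1 1 1
# 2 10 01
# 3 11 11
# 4 100 001
```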

These oddities are not purely willful: in many cases, I think they were adopted because they were easier to implement in hardware than the alternatives, and Baby was always envisaged as a testbed and proof of concept rather than as a machine that would be used for practical work. However, they do probably make it too awkward to be a suitable learning platform for people who hope to pick up the rudiments of machine code programming. Finally, nostalgia is an unlikely motive too, as there are probably very few people alive who would know how to operate Baby.

The difficulties with programming Baby are real, but not impossible to overcome. The idea of a computer that cannot add is not as frightening as it sounds. It can certainly add; it just needs to be told how. We start by observing that x + y is equal to –(–x – y): if you aren’t sure of it, try multiplying both sides by –1 and you will end up with –x – y = –x – y. This means addition can be carried out in terms of subtraction, provided we can also use negation.

Does Baby have a ‘negate’ instruction? It does. In fact—gasp at the designers’ audacity!—it offers no way of getting a number into the accumulator (the machine’s working register) without negating it in the process. So, a program to add two numbers can begin by loading the negative of x into the accumulator, and then subtracting y. We now have the negative of the answer we are looking for: so, we write it into some convenient storage cell z, then read it back into the accumulator—negating it again and leaving us with a positive number. If necessary, we can write that result back into z, meaning it will be available further on in the program; or we can stop there. Other, more sophisticated operations can similarly be built up by combining the machine’s seven basic instructions.
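The sequence of steps can be traced in Python. This is only a sketch of the arithmetic, not an emulator: the function name `baby_add` is invented, the store is modelled as a dictionary keyed by the names x, y and z used above, and overflow of the machine's fixed-size words is ignored. The mnemonics in the comments (LDN for load negative, SUB, STO) are the ones conventionally used when writing out Baby programs.

```python
def baby_add(x, y):
    """Add two numbers using only negated loads, subtraction and stores."""
    store = {"x": x, "y": y, "z": 0}

    acc = -store["x"]          # LDN x : load the negative of x
    acc = acc - store["y"]     # SUB y : accumulator is now -x - y
    store["z"] = acc           # STO z : park the negated answer
    acc = -store["z"]          # LDN z : negate again, giving x + y
    store["z"] = acc           # STO z : optional, keeps the sum for later use
    return store["z"]


print(baby_add(2, 3))     # 5
print(baby_add(-7, 4))    # -3
```

Four instructions are enough if the sum is only needed in the accumulator; the fifth is the optional write-back mentioned above.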

It may be thought, however, that there are limits to how sophisticated these will ever be. Surely there are things we can do with modern computers that would be simply impossible on such a restricted machine as Baby? In practice, this is definitely true: the tiny storage space (an eighth of a kilobyte, in today’s terminology) makes sure of that. Even a comparatively simple problem like multiplying two whole numbers takes a program that almost fills Baby’s memory—at least it does if you want a good multiplication program, one that will still produce the correct answer whether the numbers it has to multiply are positive, negative, or zero. But there is an important theoretical sense in which the idea that bigger and better computers can do things Baby can’t is actually not true at all.

One of the fundamental theories of computer science is that, aside from considerations of speed and memory size, every computer ever constructed is at most as powerful as a Turing machine, the abstract mathematical model of a computer that Alan Turing put forward in 1936. There is no problem whatsoever that a Turing machine could not solve and the latest supercomputer could, assuming they were both given unlimited time and memory to work with. And it turns out that a computer has the same power (always ignoring speed and memory) as a Turing machine if it can do a few quite simple things: it needs to be able to loop, or perform the same sequence of instructions repeatedly; and it needs to be able to branch, or select different sequences of instructions to perform based on some condition.

Baby can do both. Its Test, or ‘skip one if negative’, instruction is designed specifically to allow branching (probably with a ‘jump (somewhere)’ instruction in the cell that is to be either skipped or not skipped); and, if the target for the conditional branch is set as some earlier point in the program, then we have looping too. In fact, multiplication is implemented using looping and branching. But the important point is that Baby’s machine code, counterintuitive though it may be, is nonetheless sufficiently powerful that it can in principle express any computation that can be expressed in any other ‘Turing-complete’ programming language. The only restrictions on Baby’s ability to run any program that could be run on any computer that has ever existed are speed and memory.
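To make the looping and branching concrete, here is a minimal Python sketch of a Baby-style interpreter, together with a short program that multiplies by repeated addition. Several things here are assumptions of the sketch rather than facts about the real machine or about JsSSEM: instructions are held as `(mnemonic, cell)` tuples instead of encoded words, arithmetic is not wrapped to a fixed word size, the control register is advanced at the start of each cycle so that the first instruction run is in cell 1, and the program layout (data in cells 20 to 23) is invented for the example. The seven mnemonics are the conventional ones.

```python
def run(store, max_steps=10_000):
    """Run the program held in `store` in place; return the accumulator."""
    acc = 0                           # accumulator
    ci = 0                            # control register
    for _ in range(max_steps):
        ci = (ci + 1) % len(store)    # advance, then fetch
        op, addr = store[ci]
        if op == "JMP":               # indirect jump
            ci = store[addr]
        elif op == "JRP":             # relative jump
            ci += store[addr]
        elif op == "LDN":             # load negative
            acc = -store[addr]
        elif op == "STO":             # store accumulator
            store[addr] = acc
        elif op == "SUB":             # subtract
            acc -= store[addr]
        elif op == "CMP":             # Test: skip next if negative
            if acc < 0:
                ci += 1
        elif op == "STP":             # stop
            return acc
    raise RuntimeError("program did not stop")


def multiply(a, b):
    """Compute a * b for a >= 0 by adding b to a total, a times."""
    store = [0] * 32                  # cell 0 stays 0: the loop's jump target
    program = [
        ("LDN", 20),   # 1: acc = -n, where n is the remaining count
        ("CMP", 0),    # 2: if n > 0, skip the stop and carry on
        ("STP", 0),    # 3: n has reached 0, so finish
        ("SUB", 23),   # 4: acc = -n - (-1) = -(n - 1)
        ("STO", 20),   # 5
        ("LDN", 20),   # 6: acc = n - 1
        ("STO", 20),   # 7: n = n - 1
        ("LDN", 22),   # 8: acc = -t, where t is the running total
        ("SUB", 21),   # 9: acc = -t - b
        ("STO", 22),   # 10
        ("LDN", 22),   # 11: acc = t + b
        ("STO", 22),   # 12: t = t + b
        ("JMP", 0),    # 13: cell 0 holds 0, so execution resumes at cell 1
    ]
    store[1:1 + len(program)] = program
    store[20], store[21], store[22], store[23] = a, b, 0, -1
    run(store)
    return store[22]


print(multiply(3, 5))    # 15
```

Even this naive version, which gives the wrong answer when the first factor is negative, uses thirteen instruction cells and four data cells, which gives some idea of why a robust multiplication routine comes close to filling the store.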

This is the essence of Baby’s claim to have been the first modern universal computer. There were faster calculating machines before it, with a richer suite of basic operations; but they could not be freely reprogrammed to solve any computable problem, the way Baby could. There were more expressive programming languages; but they existed as formalisms on paper, rather than being implemented in circuitry. Baby was the first electronic computer that was logically equivalent to the electronic computers we use today.

And this concept of Turing completeness is actually the mathematical basis for why emulating one computer on another is even possible. You could perhaps imagine a world in which universal computers did not exist, meaning all computing machines were special-purpose devices with instruction sets adapted for specific algorithms or tasks. In that world, each particular special-purpose computer might be able to perform some operations that could not be made out of any combination of the operations supported by a different special-purpose computer. They would not, in general, be able to emulate one another. The Church-Turing thesis, named for Turing and for the US mathematical logician Alonzo Church, says that that is not the world we are living in; and, beginning on 21 June 1948 with the first successful run of the Manchester Baby, this idea has gradually moved out of the realm of pure theory and become a part of our everyday experience.

So, there is a sense in which the best answer to the question ‘Why emulate the Manchester Baby?’ is: because you can. Because it was the first electronic machine to be logically equivalent to the computers you use for work, study, and leisure today. Because, if it had the time and space, it could emulate your computer too. And because grappling with its fiddly, limited set of only seven instructions can bring some central questions of the theory of computation vividly to life. There are, as a matter of fact, deliberately obscure programming languages and minimalistic instruction sets that have been designed to achieve Turing completeness with only a tiny repertoire of operations; but they all have a degree of artificiality, and few of them are really more obscure—or even much more minimal—than Baby’s code, which for a moment was the most powerful and expressive computer language in the world.

But it should also be pointed out that programming a Baby emulator can be both frustrating and delightful, like a good puzzle. The machine’s tiny memory and tiny instruction set are potentially universal, but also tightly restrictive; you will beam, when you see a way to save a word of storage by using the same number as an integer constant and as a jump target; you will groan, or swear like a trooper, when you realize you’ve missed out a ‘store’ instruction and you need to push the addresses of all your variables back by one. Occasionally you will astonish yourself with what the machine can do: one of the sample programs provided with JsSSEM, my web-based emulator, is a playable game—the iterated Prisoner’s Dilemma—with an AI opponent that plays according to Anatol Rapoport’s tournament-winning Tit for Tat strategy. (The credit is of course Rapoport’s, for devising a strategy so simple and elegant that fitting it into Baby is even conceivable.) More often, you will be infuriated by how difficult it is to make it do anything at all: my Prisoner’s Dilemma program contains one little passage that could easily be implemented more transparently and efficiently, but I only noticed it when I had finally got the thing working—and I was certainly not prepared to start tinkering with it all over again. Why emulate the Manchester Baby? There are times I’ve asked myself the same question.

But my real answer is: because it’s historically important, it’s theoretically illuminating, and it can even sporadically be fun. And those aren’t the worst reasons.

Click here to try your hand at the online Baby emulator. Visit the world’s only working replica of Baby at the Museum of Science and Industry.